TEM vision software


Revision as of 20:51, 11 May 2010

Prototype using Distributed Ruby for vision-based closed-loop control

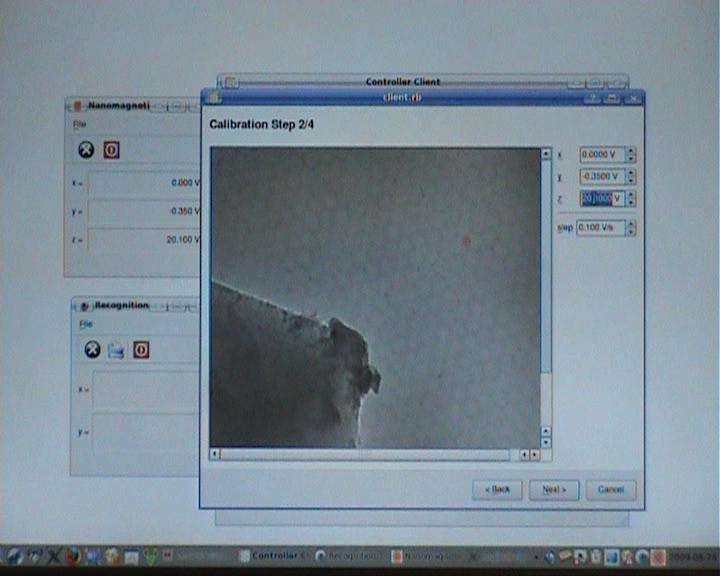

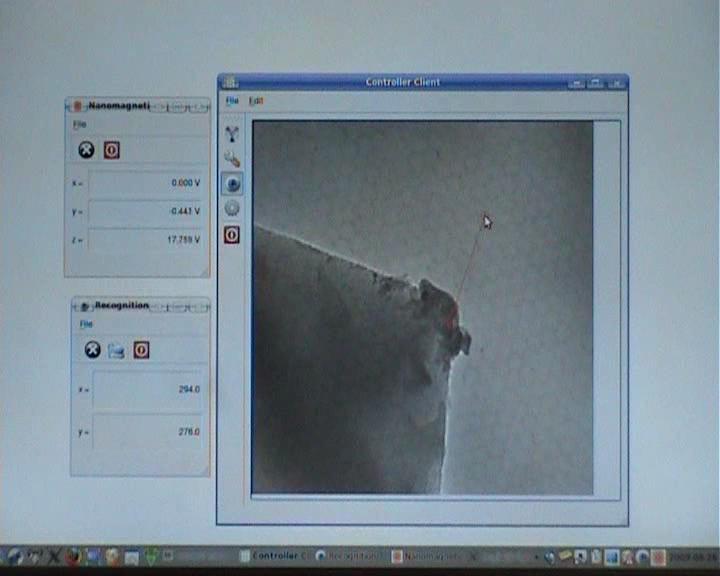

As part of the Nanorobotics project, TEM vision software was developed. It works with a JEOL 3010 transmission electron microscope equipped with a TVIPS FastScan-F114 camera, which is an IIDC/DCAM-compatible FireWire camera. The nano-indenter is controlled by a Nanomagnetics SPM controller (the old version of the controller is accessed via a PCI-DIO24 card).

The software runs under GNU/Linux. It uses Damien Douxchamps' libdc1394 to access the camera and Warren Jasper's PCI-DIO24 driver to access the PCI card that interfaces with the SPM controller.

The software was implemented in Ruby using Qt4-QtRuby, HornetsEye, libJIT, and a custom Ruby extension that accesses the SPM controller via the PCI-DIO24 card. Distributed Ruby and multiple processes were used to work around the lack of native threads in Ruby-1.8.
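Distributed Ruby lets one process expose an object that other processes call as if it were local, so a blocking task such as image acquisition can run in its own interpreter. The following is a minimal sketch of that pattern, not the original visiongui code: the `FrameSource` class and its `grab` method are hypothetical stand-ins for the camera process, the sketch targets a current Ruby rather than Ruby-1.8, and `fork` assumes a Unix-like host such as the GNU/Linux system described above.

```ruby
require 'drb/drb'

# Hypothetical stand-in for the process that owns a blocking device such as
# the camera; it is not part of the original software.
class FrameSource
  def initialize
    @count = 0
  end

  # Pretend to acquire a frame and return a small, marshallable summary.
  def grab
    @count += 1
    { index: @count, mean: 128 }
  end
end

reader, writer = IO.pipe

# Child process: serve a FrameSource over Distributed Ruby.
pid = fork do
  reader.close
  trap('TERM') { exit!(0) }  # exit without running inherited at_exit handlers
  DRb.start_service('druby://127.0.0.1:0', FrameSource.new)  # port 0: OS picks a free port
  writer.puts DRb.uri        # report the actual URI to the parent
  writer.close
  DRb.thread.join            # handle requests until terminated
end

# Parent process: wait for the URI, then call the remote object.
writer.close
raise 'frame server did not start' unless IO.select([reader], nil, nil, 5)
uri = reader.gets.chomp
reader.close

camera = DRbObject.new_with_uri(uri)
frames = Array.new(3) { camera.grab }  # each call is a round trip to the child

Process.kill('TERM', pid)
Process.wait(pid)

frames.each { |f| puts "frame #{f[:index]}: mean #{f[:mean]}" }
```

Because the served object lives in a separate process, a call that blocks inside it does not stall the caller's interpreter, which is the limitation of Ruby-1.8 green threads that the multi-process design addresses. On Ruby 3.4 and later, `drb` ships as a bundled gem rather than a default gem.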

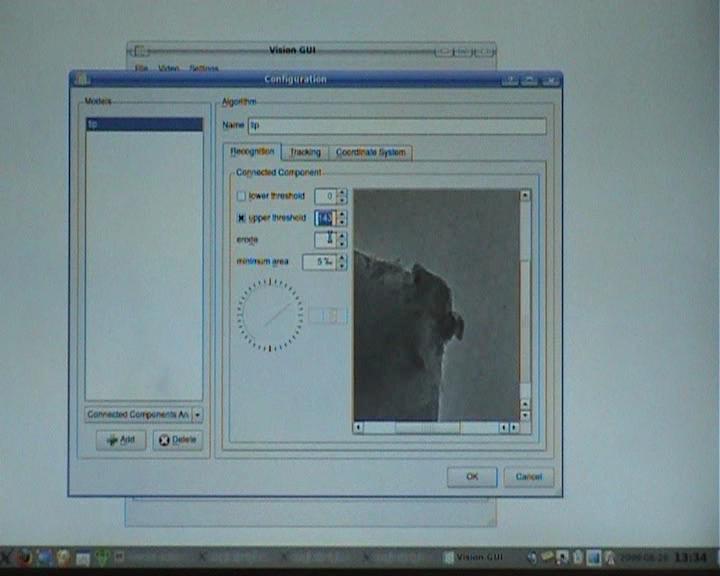

The vision algorithms are configured with a separate program, and the configuration is saved to a file using Ruby marshalling. A plugin-based architecture accepts plugins for recognition and tracking, which allows the user to select and configure Normalised Cross-Correlation, Lucas-Kanade tracking, or Connected Component Analysis.
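The idea of selecting a plugin by name and persisting its settings with marshalling can be sketched as follows. Everything here is an assumption for illustration: `VisionPlugin` is a hypothetical registry, `NCCRecognizer` is a plain-Ruby recognition plugin that performs an exhaustive normalised cross-correlation search, and images are nested arrays rather than HornetsEye objects. None of these names come from visiongui itself.

```ruby
require 'tmpdir'

# Hypothetical plugin registry; the real visiongui plugin interface may differ.
module VisionPlugin
  REGISTRY = {}

  def self.register(name, klass)
    REGISTRY[name] = klass
  end

  # Instantiate a registered plugin with keyword parameters.
  def self.create(name, **params)
    REGISTRY.fetch(name).new(**params)
  end
end

# Recognition plugin: exhaustive normalised cross-correlation (NCC) search.
# Images and templates are arrays of rows of numbers.
class NCCRecognizer
  def initialize(template:)
    @template = template
  end

  # Returns [[row, col], score] for the window that best matches the template.
  def locate(image)
    th = @template.size
    tw = @template.first.size
    t = @template.flatten
    t_mean = t.sum.to_f / t.size
    t_dev = t.map { |v| v - t_mean }
    t_norm = Math.sqrt(t_dev.sum { |d| d * d })
    best = [nil, -Float::INFINITY]
    (0..image.size - th).each do |r|
      (0..image.first.size - tw).each do |c|
        w = image[r, th].flat_map { |row| row[c, tw] }
        w_mean = w.sum.to_f / w.size
        w_dev = w.map { |v| v - w_mean }
        w_norm = Math.sqrt(w_dev.sum { |d| d * d })
        next if w_norm.zero? || t_norm.zero?  # NCC undefined for flat patches
        score = t_dev.zip(w_dev).sum { |a, b| a * b } / (t_norm * w_norm)
        best = [[r, c], score] if score > best[1]
      end
    end
    best
  end
end

VisionPlugin.register('Normalised Cross-Correlation', NCCRecognizer)

# The configuration program selects a plugin and its parameters ...
config = { plugin: 'Normalised Cross-Correlation', params: { template: [[0, 9], [9, 0]] } }

# ... and saves them with Ruby marshalling; the control program restores them.
restored = Dir.mktmpdir do |dir|
  path = File.join(dir, 'vision.cfg')
  File.binwrite(path, Marshal.dump(config))
  Marshal.load(File.binread(path))
end

recognizer = VisionPlugin.create(restored[:plugin], **restored[:params])
image = [
  [1, 1, 1, 1],
  [1, 1, 0, 9],
  [1, 1, 9, 0],
  [1, 1, 1, 1]
]
position, score = recognizer.locate(image)
puts "match at #{position.inspect}, score #{score.round(3)}"
```

A tracking plugin (for example Lucas-Kanade) would be registered the same way under its own name. `Marshal.load` should only be applied to files written by trusted programs, and the loading program must define every class referenced in the marshalled data.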


Demonstration

Videos

Demonstration of TEM vision software including telemanipulation as well as closed-loop control using machine-vision feedback (also available as DivX3 videos configuration.avi (64 MByte), closed-loop.avi (44 MByte), and interaction.avi (19 MByte))

Setup procedure

Download

The software can be downloaded here: visiongui-0.2.tar.bz2

The software is implemented in Ruby. It uses HornetsEye (the current development snapshot of version 0.32) and Qt4-QtRuby.

Future Work

Possible future work includes:

- port to Ruby-1.9, which has native threads

- integrate the serial-port interface of the JEOL TEM

- access the USB controls for shutter and gain of the TVIPS camera

- feature-based recognition and tracking (less sensitive to brightness changes)

- offset and gain compensation for the camera image

See Also

External Links

- Hardware

- Software

- Related publications
  - Jung-Me Park, C. G. Looney, Hui-Chuan Chen: Fast connected component labeling algorithm using a divide and conquer technique, 15th International Conference on Computers and their Applications, March 2000, pp. 373-376.
  - J. P. Lewis: Fast Normalized Cross-Correlation, Industrial Light & Magic.
  - S. Baker, I. Matthews: Lucas-Kanade 20 Years On: A Unifying Framework, International Journal of Computer Vision, Vol. 56, No. 3, March 2004, pp. 221-255.